Software is changing with AI faster than almost any other field. The gap is easy to see. Less obvious is why it exists, and what it means for the fields that are lagging.

If you spend time around developers, the shift already feels obvious. The tools keep getting better. People who were skeptical a year ago now use them every day. Workflows that felt experimental now feel normal. And every few months, the ceiling moves again.

If you look at healthcare, the feeling is completely different. There is interest, there is excitement, there are pilots, there are demos, but the actual day-to-day reality still feels like a much earlier stage.

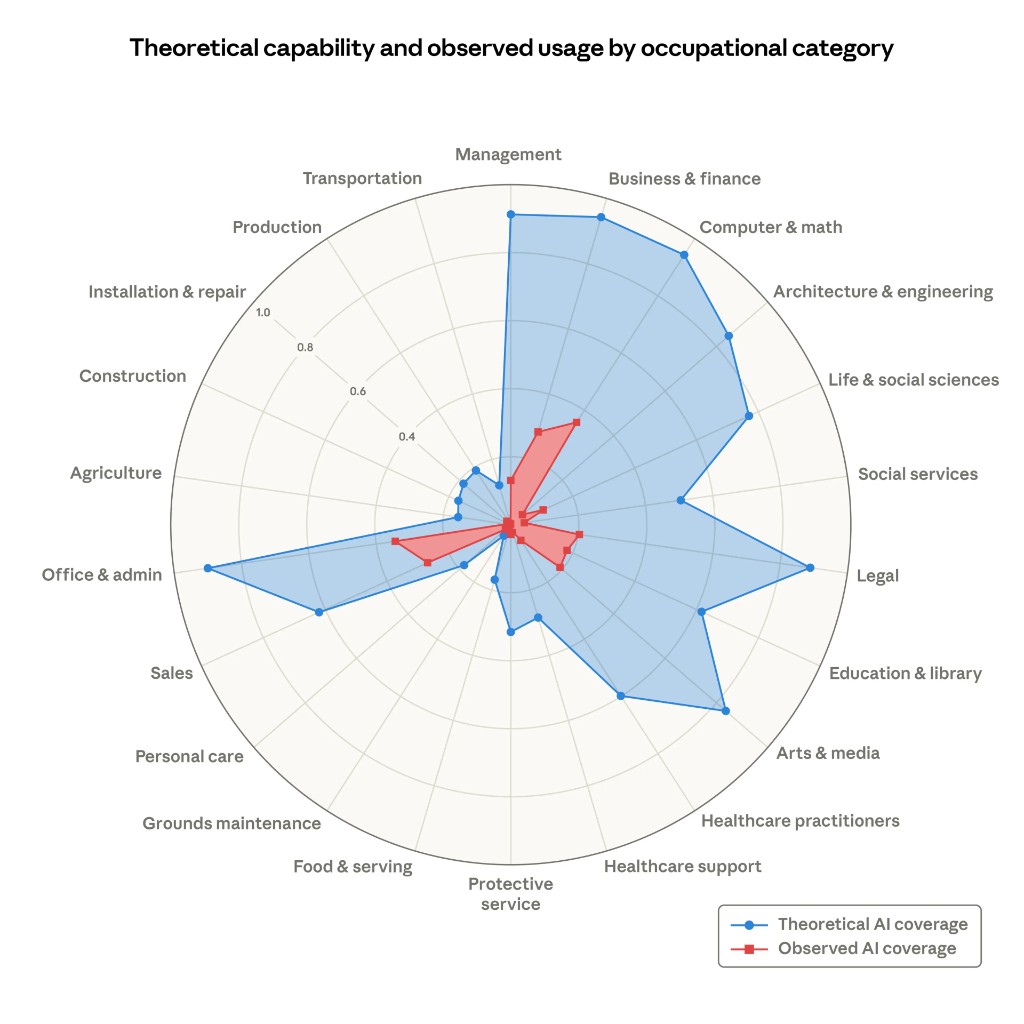

Anthropic's recent labor market paper gives a clean way to see this. It separates what AI could theoretically do from what people are actually doing with it. In software, the gap between those two numbers is large but closing fast. In healthcare, the second number barely registers.

It is tempting to explain this with the usual answers. Healthcare is regulated. The stakes are higher. Liability is messy. Data is fragmented. All of that is true. But I don't think those explanations get to the center of it.

The deeper reason software is moving first is that software is uniquely good at starting an adoption flywheel.

That matters more than raw capability.

People often talk about AI adoption as if it were mostly a question of whether the models get good enough. On that view, once they cross some threshold, the industry changes. But that framing leaves out something important. Adoption is not just about what the model can do. It is about whether the industry can build the surrounding tools, habits, and trust needed to get more and more out of it over time.

Software has been unusually good at this.

The first generation of AI coding tools was not amazing. They were inconsistent, shallow, and often wrong in annoying ways. But they were good enough to show the shape of the future. That was enough. Developers started using them anyway. Then they built better prompts, better IDE integrations, better evals, better workflows, better guardrails, better ways of dividing work between the human and the model. The tools improved, which made people use them more, which created more feedback, which justified building even more tooling around them.

That is the flywheel.

You do not need perfection to start it. You just need enough value in the first iteration that people want to push for a second one.

Software got there early.

Healthcare mostly has not.

Software is not just another industry adopting AI. It is also the industry that builds the tools every other industry will use to adopt AI.

That makes it different from almost everything else on that chart.

If healthcare adopts AI faster, that is important for healthcare. It may improve documentation, triage, follow-up, diagnosis support, and administrative workflows. But it does not do much to accelerate AI adoption in legal, finance, education, logistics, or manufacturing.

If software adopts AI faster, it accelerates everything.

As the cost of producing software drops, the cost of building AI-native infrastructure for every other industry drops with it. You can build more copilots, more workflow tools, more orchestration layers, more interfaces, more internal systems, more testing harnesses, more monitoring, more integration glue. Even if the model itself does not improve, the industry around the model becomes much better at extracting value from it.

That is why software feels like the frontier, but also something more than the frontier. It is a meta-category. Progress there compounds into progress elsewhere.

In that sense, software is the first industry to change, but it is also the delivery mechanism through which AI reaches the others.

That has a big implication for healthcare.

If you want to imagine what the future of AI in healthcare might look like, it is not enough to ask what the models will eventually be able to do. You also have to ask what software abundance will make possible around them.

The reason AI feels so useful in software is not just that models can write code. It is that an entire stack grew around that fact. There are editors designed for it, agent workflows designed for it, testing environments designed for it, version control and deployment practices that make experimentation cheap, and a culture that tolerates iteration. Developers can try something, see the result quickly, fix it, try again, and build on what worked.

Healthcare has much less of that.

A doctor does not work inside a clean, AI-native environment. They work inside a mess of EHRs, billing rules, fragmented histories, scanned PDFs, clinical risk, institutional policy, and human trust. The problem is not only that medicine is harder. It is that the software substrate around medicine is much worse.

This is one reason conversations about AI in healthcare often feel strangely abstract. People jump straight to the most ambitious use cases: diagnosis, treatment planning, replacing specialists, fully autonomous care. But that is not how adoption usually starts. Adoption starts where the flywheel can start.

In software, that happened surprisingly early because bad first drafts of code were still useful. If the result was wrong, you could test it, inspect it, revise it, and keep going. The loop was fast. The gains were visible. Every iteration created pressure for another iteration.

Healthcare needs its own version of that.

It needs places where AI can be useful enough, safe enough, and integrated enough that people want to build the next layer. Documentation is the clearest example, and in a few places it is already working. Ambient AI tools that transcribe and draft clinical notes are deployed at scale now. That may seem modest, but it is exactly how the software flywheel started: not with the hardest problem, but with a problem where fast feedback was possible. Doctors who use these tools want better chart summaries next. Better chart summaries create pressure for better care-gap identification. Each step creates surface area for the next one.

Chart review, administrative coordination, prior authorizations, patient communication, and triage support are all the same kind of starting point. They are not the final destination, but they are plausible places where better tooling can create immediate value, and where that value can justify the next layer of integration.

The question is not whether healthcare eventually reaches some theoretical ceiling. The question is whether healthcare can create the conditions for compounding adoption.

Software did not adopt AI quickly because developers are more visionary, or because the work is more important, or simply because regulation is lighter. It adopted AI quickly because it had the ingredients for compounding. The work was already digital. The feedback loops were fast. The cost of experimentation was low. The surrounding tooling could be built by the same industry that was using the models. Progress in the core task immediately improved the ability to build more of the infrastructure around the core task.

Healthcare has weaker versions of all of those things, which means it will not get the same kind of adoption for free.

But it also means the opportunity is more specific than people usually think.

The opportunity is not simply “apply AI to healthcare.” The opportunity is to build the software layer that allows healthcare to start iterating with AI the way software already does.

That is a more grounded goal. It is also, I think, the real one.

It is, not coincidentally, the problem we are working on at Ecaresoft.

Because the future of AI in healthcare will not be determined only by model capability. It will be determined by whether we can build enough of the surrounding system that each small success creates pressure for the next one.

Software has already shown what that looks like. First the tool is a toy. Then it is useful in narrow cases. Then people reorganize their work around it. Then new tools appear to make the old tool better. Then the whole environment changes.

That is the part other industries should pay attention to.

Not just that software is ahead, but why it is ahead.

Software goes first because software is where the flywheel can start earliest. And once it starts there, it does not stay there. It spills outward, because software is also the thing every other industry now depends on to modernize itself.

That may turn out to be one of the most important asymmetries in this whole transition.

AI in software is not just one category moving faster than the others.

It is the category that helps all the others move.